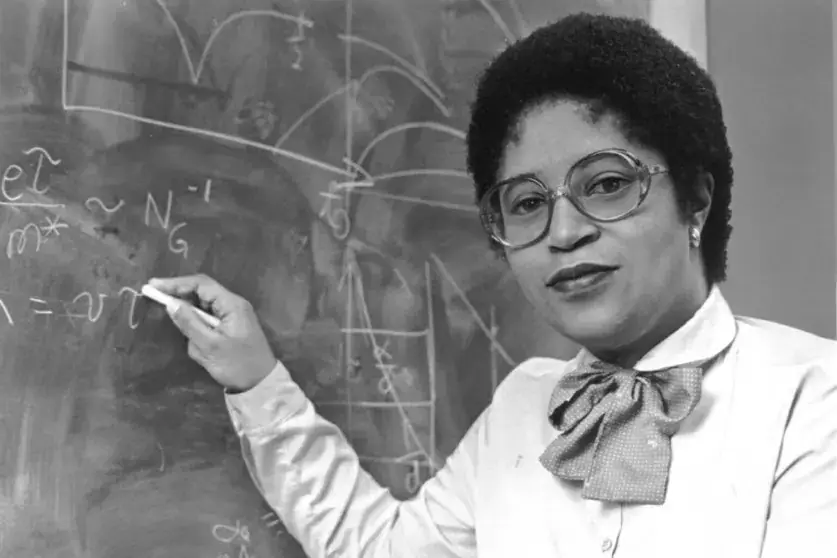

July 9, 2019

Ruha Benjamin is an associate professor of African American studies at Princeton University, and lectures around the intersection of race, justice, and technology. She founded the Just Data Lab, which aims to bring together activists, technologists, and artists to reassess how data can be used for justice. Her latest book, Race After Technology, looks at how the design of technology can be discriminatory.

Where did the motivation to write this book come from?

It seems like we’re looking to outsource decisions to technology, on the assumption that it’s going to make better decisions than us. We’re seeing this in almost every arena – healthcare, education, employment, finance – and it’s hard to find a context which it hasn’t penetrated.

Something which really sparked my interest was a series of headlines and articles I saw, all about a phenomenon dubbed “racist robots”. Then, as time went on, the tone of these articles and headlines became less surprised, and they started to say that of course the robots are racist, because they’re designed in a society with these biases.

The idea that software can have prejudice embedded in it is known as algorithmic bias – how does it amplify prejudice?

Many of these automated systems are trying to identify and predict risk. So we have to look at how risk was assessed historically – whether a bank would extend a loan to someone, or if a judge would give someone a certain sentence. The decisions of the past are the input for how we teach software to make those decisions in the future. If we live in a society where police profile black and Latinx people, that affects the police data on who is likely to be a criminal. So you’ll have these communities overrepresented in the data sets, which are then used to train algorithms to look for future crimes or predict who’s seen to be higher risk and lower risk.

Are there other areas of society – such as housing or finance – where the use of automated systems has resulted in biased outcomes?

Policing and the courts are getting a lot of attention, as they should. But there are other areas too, such as Amazon’s own hiring algorithms, which discriminated against women applicants, even though gender wasn’t listed on those résumés. The training set used data about who already worked at Amazon. Sometimes, the more intelligent machine learning becomes, the more discriminatory it can be – so in that case, it was able to pick up gender cues based on other aspects of those résumés, like their previous education or their experience.

